Top 10 Analytics And Business Intelligence Trends For 2024

Business Intelligence Trends 2024


Over the past decade, business intelligence has been revolutionized. Data exploded and became big. And just like that, we all gained access to the cloud. Spreadsheets finally took a backseat to actionable and insightful data visualizations and interactive business dashboards. The rise of self-service analytics democratized the data product chain. Suddenly, advanced analytics wasn’t just for analysts.

2023 was a particularly major year for the business intelligence industry. The trends we presented last year will continue to play out through 2024. But the BI landscape is evolving, and the future of business intelligence is playing out now, with emerging trends to watch. In 2024, BI tools and strategies will become increasingly customized. Businesses of all sizes are no longer asking if they need increased access to business intelligence analytics, but what the best BI solution is for their specific needs.

Businesses are no longer wondering if visualizations improve analyses but what is the best way to tell each data story, especially with the help of modern BI dashboard software. 2024 will be the year of data security and discovery: clean and secure data combined with a simple and powerful presentation. It will also be a year of collaborative BI and AI. We are excited to see what this new year will bring. Read on to see our top 10 business intelligence trends for 2024!

Try our BI software 14-days for free & take advantage of your data!

Let’s Discuss The Top Trends In Business Intelligence

1) Artificial Intelligence

We will start analyzing what is new in business intelligence with AI. This trend is widely covered by Gartner in their latest Strategic Technology Trends report, which combines AI with engineering and hyper-automation and concentrates on security, given that AI risks creating vulnerable points of attack.

Artificial intelligence (AI) is the science aiming to make machines execute what is usually done by complex human intelligence. Often portrayed in movies as the ultimate friend-turned-foe of the human race (Skynet in Terminator, The Machines of Matrix, or the Master Control Program of Tron), AI is not yet on the verge of destroying us, despite the legitimate warnings of some reputable scientists and tech entrepreneurs.

While we work on programs to avoid such an outcome, AI and machine learning are revolutionizing the way we interact with our analytics and data management, and stronger security measures must be taken into account. The fact is that AI is affecting our lives and will continue to do so, whether we like it or not.

It is expected that in the coming year, AI will evolve into a more responsible and scalable technology as organizations will require a lot more from AI-based systems. According to Gartner’s Data and Analytics research for 2021, with COVID-19 completely changing the business landscape, historical data will no longer be the main driver of AI-based technologies. Instead, these solutions will need to work with smaller datasets and more adaptive machine learning while also being compliant with new privacy regulations. This concept is known as ethical AI, and it aims to ensure that organizations use AI systems in a way that will not break the law. To this day, many organizations have faced legal issues for illegally collecting user data. The Facebook and Cambridge Analytica scandal is a perfect example of that.

In that sense, implementing systems and models to ensure the correct use of AI-related technologies will become even more important in the coming years. In fact, the US government recently released a blueprint for the “AI Bill of Rights”, presenting 5 principles that should guide the design, use, and deployment of automated systems “to protect the American public in the age of artificial intelligence”.

In response to this increasing need for AI accountability, Gartner presents AI TRiSM as one of the concepts that will help organizations ensure “AI model governance, trustworthiness, fairness, reliability, robustness, efficacy, and data protection”. This cross-functional framework needs to be implemented from the earliest stages of system design and involve people from compliance, legal, IT, and analytics for a successful approach. By 2026, businesses that apply this framework to their AI models are expected to be 50% more successful in adoption, business goals, and user acceptance.

It can’t be denied that AI is still a topic of concern even today. The number of AI-based applications has become so big that many IT professionals don’t even know how to use or interpret them. This leaves the doors open for breaches and financial losses that can significantly impact companies and customers alike. As a response, terms such as explainable AI (XAI) will be at the center of the conversation during 2024. XAI is an emerging field that aims to apply specific processes and methods to allow humans to understand the results and outputs created by machine learning and AI algorithms. The end goal of this field is to ensure trust and transparency with these systems to give humans control over them.

AI-based business analytics

When it comes to analytics, businesses are evolving from static, passive reports of things that have already happened to proactive analytics with dashboards that help them see what is happening at every second and give alerts when something is not how it should be. Solutions such as an AI algorithm based on the most advanced neural networks provide high accuracy in anomaly detection as it learns from historical trends and patterns. That way, any unexpected event will be immediately registered, and the system will notify the user.
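To make the idea of learning from historical patterns more concrete, here is a minimal sketch of anomaly detection. Note that it swaps the neural network mentioned above for a much simpler rolling z-score, and that the function name `find_anomalies`, the window size, the threshold, and the sample revenue figures are all assumptions made for illustration, not any vendor's actual algorithm.

```python
from statistics import mean, stdev


def find_anomalies(values, window=7, threshold=3.0):
    """Return the indexes of values that deviate strongly from the preceding window.

    Illustrative rolling z-score check (an assumption for this sketch,
    not the neural-network approach a BI tool might actually use).
    """
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: treat any change at all as a deviation.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical daily revenue figures with one obvious spike.
daily_revenue = [100, 102, 98, 101, 99, 103, 100, 101, 250, 102]
print(find_anomalies(daily_revenue))
```

In a real dashboard, the flagged index would trigger the alert to the user rather than a print statement.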

Another feature that AI has on offer in BI solutions is the upscaled insights capability. It basically fully analyzes your dataset automatically without needing effort on your end. You simply choose the data source you want to analyze and the column/variable (for instance, revenue) that the algorithm should focus on. Then, calculations will be run and come back to you with growth/trends/forecast, value driver, key segments correlations, anomalies, and what-if analysis. That is an incredible time gain as what is usually handled by a data scientist will be performed by a tool, providing business users with access to high-quality insights and a better understanding of their information, even without a strong IT background.

Time gain is also present in the form of AI assistants. Tools have started to develop AI features that enable users to communicate with the software in plain language - the user types a question or request, and the AI generates the best possible answer. If this is something you are interested in, then keep reading because we will dive into it in more detail later in the post with the natural language processing trend.

The demand for real-time online data analysis software is increasing, and the arrival of the IoT (Internet of Things) also brings countless amounts of data, pushing statistical analysis and management to the top of the priority list. However, businesses today want to go further, and Adaptive AI might be the answer. As stated by Gartner, Adaptive AI systems “support a decision-making framework centered around making faster decisions while remaining flexible to adjust as issues arise”. What makes these systems so interesting for companies today is the fact that they can learn from behavioral patterns and adjust to real-world changes, making it easier to make fast and improved decisions.

In that same realm, Generative AI is another technology that revolutionized the industry in 2023 and will continue to do so in 2024. It basically enables AI systems to generate text, images, audio, and other types of content based on human-generated input. A famous example of Generative AI is ChatGPT. In 2023, the tool revolutionized the industry with its ability to generate well-written texts based on a short input. However, as with many AI-related innovations, ChatGPT was quickly scrutinized because it could produce biased output, copyright infringement, fake news, and more if not used ethically.

From a business perspective, using technologies like Adaptive and Generative AI has facilitated several processes, including data collection, cleaning, and analysis, which can be automated and tailored to the company’s needs. Risk management is another area in which these technologies thrive. Businesses can use Generative AI to predict any kind of fraud or attack, as well as generate risk simulations and test strategies in an imaginary scenario.

Overall, we cannot deny the value of AI and how it has continued to develop over the years. That said, it is fundamental for regulators and decision-makers to ensure ethical and secure measures are being imposed when implementing these systems. It all comes back to security, and we will discuss it in more detail in our next trend.

2) Data Security

As you saw with our extensive AI trend, data and information security were on everyone’s lips in 2023, and they will continue to create buzz in 2024. The implementation of privacy regulations such as the GDPR (General Data Protection Regulation) in the EU, the CCPA (California Consumer Privacy Act) in the USA, and the LGPD (General Personal Data Protection Law) in Brazil has laid the building blocks for data security and the management of customers’ personal information.

Moreover, the European Court of Justice's recent invalidation of the legal framework known as the EU-US Privacy Shield hasn't made software companies' lives any easier. The Shield enabled them to transfer data from the EU to the USA, but since recent legal developments invalidated it, companies that have their headquarters in the US no longer have the right to transfer any data of EU data subjects.

Actually, a similar situation happened in 2015 when the EU and the USA had no legally valid agreements on this matter for a while. Many US-based (software) providers argue that they use European servers, and there is no data transfer to the US at all. However, from a legal perspective, even this solution is questionable, as, in theory, the US judiciary could force US-based businesses to reveal even data from EU-based servers. In essence, the information that is located in the EU needs to stay in the EU. In practice, that means that EU-based businesses currently using US-based software vendors that store any kind of data for them are taking risks, as they operate in a legal grey area. For companies such as datapine, this doesn't represent a big issue since the registration, business, and servers are located in the EU.

Taking all this into account, businesses have been forced to invest in security to stay compliant with the new regulations and also to protect themselves from cybercrime. In fact, global spending on cybersecurity products is expected to reach $1.75 trillion in the next 5 years. This is not a surprise to the experts as, during 2020 and the beginning of COVID-19, companies of all sizes were forced to shift from physical to digital, and, to accelerate the transformation, they relied on online services, leaving a gap for cybercriminals to attack. According to the 2023 KPMG CEO Outlook Pulse survey, cybersecurity is among the top 10 “risks to growth” topics for CEOs in the coming years. Even more concerning is that 27% of the surveyed CEOs admit to being unprepared for a potential attack, up from 24% in the previous year.

This might change now that company boards recognize cybersecurity as an overall business risk more than an IT-related issue. According to Gartner's Cybersecurity Predictions for 2023-2024, by 2026, 70% of boards will include one member with cybersecurity expertise.

Amongst the measures organizations are taking in the coming years, we will see increased adoption of the Zero Trust framework. Zero Trust doesn’t describe a specific technology but an approach in which businesses remove the “implicit trust” from all computing infrastructure by verifying every stage of digital interaction from devices to users, regardless of location. This means every user who wants to interact with the company's systems needs to be validated and verified. According to Gartner, by 2026, 10% of large enterprises will have a “comprehensive, mature and measurable” Zero Trust program in place, compared to the less than 1% that have one today. However, almost half of them might fail as a Zero Trust approach requires full organizational involvement and connection to business goals to succeed.
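As a rough illustration of removing “implicit trust”, the sketch below verifies both the user and the device on every request and deliberately ignores where the request comes from. The `authorize` function, the request fields, and the token and device values are hypothetical and only meant to show the principle, not a production-grade security design.

```python
def authorize(request, trusted_devices, valid_tokens):
    """Verify user and device on every request; location grants nothing.

    Hypothetical sketch: a real Zero Trust program involves far more
    (identity providers, device posture, continuous evaluation).
    """
    checks = {
        "user_verified": request.get("token") in valid_tokens,
        "device_verified": request.get("device_id") in trusted_devices,
    }
    # The "network" field is intentionally never consulted:
    # being inside the office network earns no implicit trust.
    return all(checks.values()), checks


trusted_devices = {"laptop-7"}
valid_tokens = {"t-123"}

ok_request = {"token": "t-123", "device_id": "laptop-7", "network": "home"}
bad_request = {"token": "t-123", "device_id": "tablet-9", "network": "office"}

print(authorize(ok_request, trusted_devices, valid_tokens))
print(authorize(bad_request, trusted_devices, valid_tokens))
```

The second request is rejected even though it originates from the office network, because the device itself was never verified.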

The concern in cybersecurity also presents a challenge for SaaS BI tools as they need to ensure they offer a secure product that clients will trust with their sensitive data. Like any other cloud BI solution, online business intelligence software is also subjected to security risks. Some of them include processing data quickly to provide real-time insights that might be subjected to regulatory compliance, vulnerabilities when moving data from user’s systems to the BI tool’s cloud, or when the tool provides access to data from multiple devices that may be unsafe and exposed to attacks. To prevent any of this from happening, BI software needs to have a clear focus on security.

One of the latest trends in business intelligence to help SaaS BI solutions stay safe is cybersecurity mesh architecture. Cybersecurity mesh is a composable and scalable security control that protects digital assets that reside in applications, in the cloud, IoT, and others. It seeks to establish a defined security perimeter around a person or a specific point with a more modular approach, enabling users to securely access data from their smartphones. One of Gartner's cybersecurity predictions for 2021-2022 stated that by the end of 2024, organizations adopting cybersecurity mesh architecture will reduce the financial impact of security incidents by around 90%. Since data breaches have regularly been in the news, unsettling industries and average users alike, the demand for security products and services is understandable.

With these security threats increasing, businesses must adopt an organizational approach to protect their data. That is why data governance will remain one of the hottest topics related to security in 2024. This concept refers to a set of processes, policies, and roles that ensure appropriate valuation, creation, consumption, and control of business data at a strategic, tactical, and operational level. It establishes roles and responsibilities regarding who can manipulate the data, in which situation, and with what tools and methods to ensure a secure and efficient data management process.

In the past years, due to tighter regulations, such as GDPR, organizations were obligated to ensure a secure environment for sensitive data, enhancing the need for stronger governance processes. As we mentioned earlier, companies of all sizes are exposed to attacks and breaches, leaving massive amounts of sensitive information from customers, suppliers, employees, and more exposed to misuse. In that sense, implementing a well-crafted governance plan will help organizations comply with government regulations while setting the perfect environment to use quality data and achieve their goals.

In today's highly competitive business environment, where data collection keeps growing every second, data governance becomes a mandatory practice. A well-implemented governance framework not only assists organizations in staying compliant but also in minimizing risks, reducing costs, improving communication from an internal and external point of view, and achieving strategic goals, among other things.

3) Data Discovery/Visualization

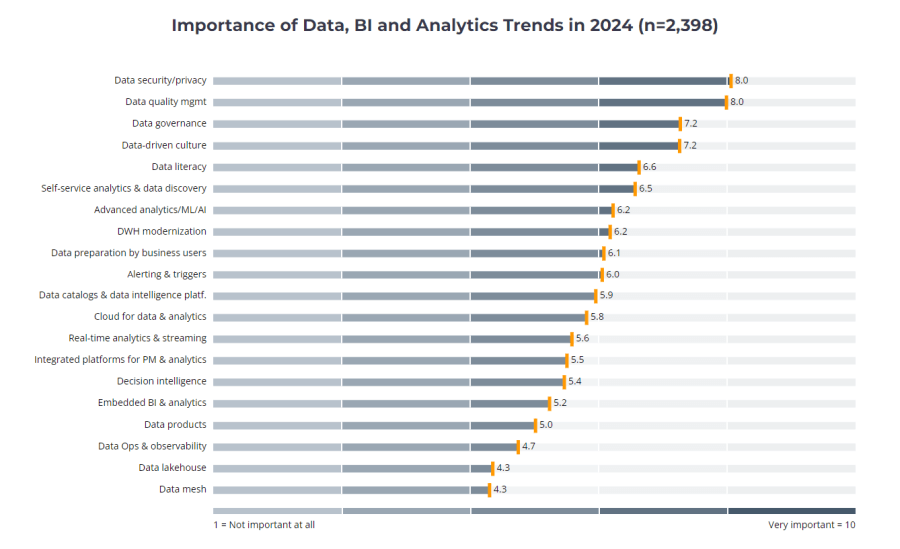

Data discovery using visuals has opened the analytical doors to a wider audience, and it is expected to keep growing in the coming years. As stated by a survey conducted by the Business Application Research Center, data discovery was already listed in the top 6 business intelligence trends by the importance hierarchy for 2023 and is expected to keep growing in 2024. BI practitioners steadily show that the empowerment of business users is a strong and consistent trend.

*Source: Business Application Research Center*

Essentially, data discovery is the process of collecting data from various internal and external sources and using advanced visual analytics tools to consolidate all the information. This allows businesses to engage every relevant stakeholder with the information by empowering them to intuitively analyze and manipulate it and extract actionable insights. To achieve this, businesses of all sizes turn to modern solutions such as business intelligence tools that offer data integration, interactive visualizations, a user-friendly interface, and the flexibility to work with big amounts of data efficiently and intuitively.

An essential element to consider is that data discovery tools depend upon a process, and the generated findings will bring business value. It requires understanding the relationship between data through data preparation, visual analysis, and guided advanced analytics. “The high demand for data discovery solutions reflects a huge shift in the BI world towards increased data usage and the extraction of insights,” the Research Center emphasizes. Using online data visualization tools to perform those actions is an invaluable resource for producing relevant insights and creating a sustainable decision-making process. That being said, business users require software that is:

- Easy to use

- Agile and flexible

- Quick to deliver insights

- Able to handle a high volume and variety of data easily

Discovering trends in business operations that you didn’t even know existed or enabling immediate actions when a business anomaly occurs have become invaluable tools in effectively managing businesses of all sizes.

Data visualization has evolved into a state-of-the-art solution to present and interact with numerous graphics on a single screen, whether it's focused on developing sales charts or comprehensive interactive reports. The point is that data discovery is a process that enables decision-makers to reveal insights, and by using visualizations, teams have the chance to spot trends and major outliers within minutes.

In 2024, the dashboard will continue to be a major visual communication tool that will enhance collaboration between teams by being the analytical hub of a project. But more than just a visualization tool, KPI dashboards will take their interactivity features to the next level with technologies such as AI-based alarms and real-time data. Since humans process visual information better, the data discovery trend will be one of the most important BI trends in 2024.

4) D&A Sustainability

Moving on with our list of the new trends in business intelligence, we have data and analytics (D&A) sustainability. The topic, also mentioned in Gartner’s 2023 Data and Analytics Trends, is one of the most important ones we will discuss in this post, as climate change will remain a global concern in the coming years.

In recent years, businesses started diving into sustainability mostly as a marketing tactic to brand themselves as “conscious”. As the topic becomes increasingly important, with new regulations forcing organizations to report on their ESG initiatives, decision-makers have realized that sustainability also represents a big way to reduce operating costs and increase overall profitability and efficiency. That is where D&A sustainability comes into the picture.

Now that businesses of all sizes and across industries have realized the hidden potential of sustainability, we will start to see many using data and analytics as a way to boost their strategies and make the most out of their efforts. By tracking important metrics like energy consumption, gas emissions, labor rights, supply chain performance, and others, organizations can extract valuable insights to guide their sustainability journey.

In 2024 and beyond, we can expect organizations to use D&A sustainability to anticipate changes in demand and adjust their resource purchases and usage to be more financially intelligent. However, we will also see other factors coming into play besides just purely resource-related data. Production levels, sales volume, employee headcounts, and even weather data will help paint a more accurate picture to facilitate real-time decision-making.

We can also expect to see different tools emerge to help track sustainability data from a past, present, and future perspective, providing a big competitive advantage for companies that manage to adopt it correctly. That being said, ensuring all employees and relevant stakeholders are involved in the process is also necessary. Implementing training instances to engage employees with the process is a good way to start.

Linking ESG initiatives to business outcomes is not an easy task. As of today, sustainability analytics is valuable for three main reasons: the first one is to stay compliant with the law, the second one is to track the performance of ESG goals, and the third one is to uncover new opportunities to keep integrating sustainability into operations. Organizational leaders must take charge to ensure all these aspects are covered and supported with the best tools and technologies.

It is no secret that sustainability has transitioned from a buzzword to a mandatory practice in the business world. It is a growing trend that we will see everywhere in 2024 and many more years to come.


5) Data Sharing

Data and analytics have become a business’s most valuable competitive asset. Making informed decisions based on accurate insights can skyrocket success to a whole new level. That being said, analyzing data and extracting insights is not enough, especially considering how accessible it has become to extract and manage valuable business data. To really extract the maximum potential out of your analytical journey, it is necessary to ensure full organizational adoption through powerful data sharing practices, which leads us to our next trend.

Gartner already identified data sharing as one of the top 10 data and analytics trends for 2023, stating that businesses that implement efficient data sharing processes with internal and external stakeholders will outperform their competitors on most business value metrics.

While the importance of data sharing might seem obvious to some, it presents a challenge for most organizations as, for decades, it was the norm to say, “don’t share data unless…”. The issue is that in today’s context, where most businesses are undergoing digital transformations, not sharing data can be detrimental, as everyone across the company needs to be united to connect analytics to general business goals. In that sense, Gartner advises organizations to switch their mindset to “must share data unless…”. Doing so will enable more robust data and analytics strategies, empowering stakeholders to make agile and informed decisions.

Changing the mindset might not be easy, and organizations that don’t take it seriously might fail in the process. Gartner suggests establishing trust-based mechanisms to ensure decision-makers trust the data they collect and use to inform their strategies. This way, they will feel confident in using it, sharing it, and re-sharing it with those who might need it. This can be easily done by tracking data quality metrics and implementing catalogs to compile all the information related to the trustworthiness of the data.
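To give a sense of what tracking data quality metrics could look like in practice, here is a small sketch that computes two common ones, completeness and uniqueness, for a set of records. The function name `quality_metrics`, the chosen metrics, and the sample customer records are assumptions for illustration; real data catalogs typically track many more dimensions.

```python
def quality_metrics(rows, required_fields, key_field):
    """Compute simple completeness and uniqueness ratios for a dataset.

    completeness: share of rows where every required field is filled in
    uniqueness:   share of distinct key values relative to the row count
    """
    total = len(rows)
    if total == 0:
        return {"completeness": 0.0, "uniqueness": 0.0}
    complete = sum(
        all(row.get(field) not in (None, "") for field in required_fields)
        for row in rows
    )
    distinct_keys = len({row.get(key_field) for row in rows})
    return {
        "completeness": complete / total,
        "uniqueness": distinct_keys / total,
    }


# Hypothetical customer records: one missing email, one missing country,
# and one duplicated id.
customers = [
    {"id": 1, "email": "a@example.com", "country": "DE"},
    {"id": 2, "email": "", "country": "FR"},
    {"id": 2, "email": "c@example.com", "country": "BR"},
    {"id": 4, "email": "d@example.com", "country": None},
]
metrics = quality_metrics(customers, ["email", "country"], "id")
print(metrics)
```

Publishing scores like these alongside each dataset is one way a catalog can signal how far the data can be trusted before it is shared.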

When discussing data sharing, the term “self-service BI” quickly pops up because those solutions do not require an IT team to access, interpret, and understand all the data. These online BI tools make sharing easier by generating automated reports that can be scheduled for specific times and sent to specific people. For instance, they enable you to set up business intelligence alerts and share public or embedded dashboards with a flexible level of interactivity. All these possibilities are accessible on all devices, which enhances the decision-making and problem-solving processes critical for today's ever-changing environment. This is especially necessary now that the pandemic has forced businesses to shift to a home office dynamic in which collaboration needs to be supported by the right tools more than ever.

Collaborative information, information enhancement, and collaborative decision-making are the key focus of new BI solutions. However, data sharing does not only occur around the exchange or updates of some documents. It has to track the progress of meetings, calls, e-mail exchanges, and ideas collection. More recent insights predict that collaborative business intelligence will become more connected to greater systems and larger sets of users. The team’s performance will be affected, and the decision-making process will thrive in this new concept.

In fact, it is expected that, in 2024, data sharing will move beyond just sharing insights and will begin at earlier stages, starting from data exploration and spreading across the entire analytical workflow for a more efficient decision-making process that includes every stakeholder, regardless of location. This last point is especially important when considering the growing security concerns many businesses face today. Implementing a collaborative BI approach enables every stakeholder and data user to be accountable for the decisions they make, ensuring a more secure workflow.

In response to all these changes, data analytics and BI providers are prioritizing collaboration for 2024, introducing multiple capabilities that connect users at every stage of their work and with a level of interactivity that breaks the barriers between data and analytics and the different business functions. A recent survey shows that 75% of executives say their business functions are competing rather than collaborating. This presents a major challenge, especially for companies still undergoing a digital transformation due to the pandemic. By implementing a collaborative approach supported by the right tools and processes, developers and average business users are expected to work together under the same analytics umbrella, enabling more united communication and a productive work environment. Let’s see how it will be developed in the business intelligence trends topics of 2024.

6) Continuous Intelligence

Next, in our list of trends in data analytics, we will talk about continuous intelligence (CI). Gartner defines the concept as a “design pattern in which real-time analytics are integrated into business operations, processing current and historical data to prescribe actions in response to business moments and other events”.

It basically describes the use of tools and processes to facilitate the integration of real-time analytics into business operations with the help of augmented analytics. Traditionally, the analytical process has relied on predefined metrics that are tracked on specified schedules. CI is a machine-driven approach that automates the extraction of data insights no matter how many data sources or massive volumes of data need to be handled, providing businesses with a continuous and frictionless flow of real-time insights.

The concept was born out of a necessity for an integrated analytical approach to keep up with modern organizations' demands in the digital revolution. Having data silos and decentralized analytical processes can only lead to a waste of resources and valuable time. With continuous intelligence, organizations can move beyond analyzing static metrics that must be constantly updated to identifying trends, growth opportunities, and anomalies that might otherwise remain hidden.

So, it is clear that all CI applications have real-time analysis at their core. However, historical data also plays a pivotal role in the process. For example, you might be a manufacturing company analyzing machine performance in real time and realize, in just seconds, that a specific part of the machine is about to fail, which can help you implement corrective measures immediately. Complementing the live data you just got, you can use historical data to understand how many times this same machine has failed in the past few weeks, months, or even years, allowing you to get a 360-degree view and make the most efficient decisions.
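The manufacturing example can be sketched in a few lines: a live sensor reading is checked against a limit, and the result is enriched with the machine's failure history. Everything specific here, including the `assess_reading` function, the 90-degree temperature limit, the 30-day lookback, and the failure log, is a hypothetical assumption chosen only to illustrate how real-time and historical data can be combined.

```python
from datetime import date, timedelta


def assess_reading(machine_id, temperature, failure_log, today,
                   limit=90.0, lookback_days=30):
    """Combine a live reading with historical failures for one machine.

    failure_log is a list of (machine_id, failure_date) tuples.
    """
    lookback = timedelta(days=lookback_days)
    recent_failures = [
        failed_on
        for machine, failed_on in failure_log
        if machine == machine_id and timedelta(0) <= today - failed_on <= lookback
    ]
    return {
        "alert": temperature > limit,             # real-time signal
        "recent_failures": len(recent_failures),  # historical context
    }


# Hypothetical failure history for two machines.
failure_log = [
    ("press-1", date(2024, 1, 5)),
    ("press-1", date(2024, 1, 20)),
    ("press-2", date(2024, 1, 10)),
    ("press-1", date(2023, 11, 1)),
]
result = assess_reading("press-1", 95.0, failure_log, today=date(2024, 1, 25))
print(result)
```

An alert on a machine with several recent failures would likely call for a different response than a first-time alert, which is the kind of context the historical data adds.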

CI tools are expected to offer automated, real-time data ingestion, simplify and unify data collection, management, and analysis, and use advanced in-memory technology to store and manage historical information. Combining these solutions will give businesses the power to optimize their day-to-day operations by spending less time sifting through massive amounts of data and more time focusing on what really matters. Plus, they can significantly accelerate the time to action in any business scenario thanks to various features, like dynamic alerting and event triggering powered by AI and ML algorithms.

In 2024 and the years ahead, we can expect more and more organizations to adopt CI technologies to make smarter decisions live and with less manual work. CI offers a shift from traditional BI processes based on curated historical data to AI-driven augmented analytics with real-time insights that allow for efficient and agile responses to unexpected events.

7) Data Literacy

As data becomes the foundation of strategic decisions for businesses of all sizes, understanding and using this data as a collaborative tool that everyone in the organization can use becomes critical for success. That said, data literacy will be one of the relevant data analytics trends to look out for in 2024.

Data literacy is defined as the ability to understand, read, write, and communicate data in a specific context. This means understanding the techniques and methods used to analyze the data as well as the tools and technologies implemented. According to Gartner, poor data literacy is listed as the second-biggest roadblock to the success of the CDO’s office, and it adds that by 2024, data literacy will become essential in driving business value.

Even with the rise of self-service tools that are accessible to everyone, data literacy continues to be the foundation of a successful data-driven culture. Business leaders are responsible for providing the needed training and tools to the entire organization to empower everyone to work with data and analytics. To achieve a successful data literacy process, a careful assessment of the skills of employees and managers needs to be made in order to identify weak spots and gaps. Gartner recommends starting by identifying fluent data users that can serve as “mediators” for non-skilled groups as well as identifying communication barriers where data is failing its purpose. With all this knowledge in hand, the creation of targeted training instances will become an easier task.

In the long run, with the proper training and the right tools, users from all levels of knowledge will be able to perform advanced analysis and use data as their main language. With technologies such as predictive analytics becoming accessible for regular users, data science will no longer need to be performed exclusively by experts, freeing these professionals to focus on other advanced tasks such as Machine Learning or MLOps. In fact, according to Gartner, it is expected that by 2025, the shortage of data scientists will no longer be an obstacle to businesses adopting advanced technological processes. That said, data literacy will be one of the most important business intelligence market trends in the coming year.

8) Natural Language Processing (NLP)

Natural Language Processing (NLP) is one of the recent trends in business intelligence that is revolutionizing how companies approach their analytical processes. Considered amongst the most powerful branches of AI, NLP enables computers and machines to understand, learn from, and interpret human language in a spoken or written form, and it can be divided into two subsets: natural language understanding (NLU) and natural language generation (NLG). NLU focuses on understanding the meaning behind text and speech, while NLG focuses on text generation based on specific data input.

This trend has grown so quickly in recent years that its worldwide market revenue of $3 billion in 2017 is expected to be more than 14 times larger by 2025, reaching $43 billion, according to research by Statista. This is not surprising, as language-processing applications are already present in our daily lives in the shape of car navigation systems, smart voice assistants like Siri or Alexa, autocomplete text features on our phones, and translation apps, just to name a few.

Considering all of that, it is not surprising that businesses have begun to adopt this technology to manage the large amounts of unstructured text data they gather from different sources such as emails, social media, or surveys. As a response, multiple BI software providers offer their users language insight features. There are two major use cases for which language processing is becoming increasingly popular in the BI industry. Let’s look at them in more detail below:

BI data assistant: Similar to the chatbots we see on multiple websites today, a data assistant is integrated into BI software to answer any analytical questions that a user might have. All you need to do is write a question in human language, and the assistant will provide you with the answer. As the technology matured in the past years, AI-based assistants went from simply showing search results for users to analyze to being able to filter and organize the data to generate analytical insights as an answer. This development has also helped democratize data as non-technical users can simply type a question, and the software will automatically show them an answer without needing complicated calculations or analysis.

Sentiment analysis: Also known as opinion mining, it is the process of analyzing text data to identify the emotional tone behind it. Businesses often use it to analyze comments on social media, emails, blog posts, webchats, and more, and to determine whether the tone of what is being said is negative, positive, or neutral. Through this, organizations can extract useful insights regarding product development and brand positioning, as well as understand pain points to improve the customer experience at different touchpoints.
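To make the sentiment analysis idea concrete, here is a minimal sketch of the simplest possible approach: counting positive and negative words in a comment. The word lists, the `classify_sentiment` function, and the sample comments are all hypothetical and chosen only for illustration; production BI tools typically rely on trained language models rather than a hand-written lexicon.

```python
import re

# Hypothetical, hand-picked word lists used only for this illustration.
POSITIVE_WORDS = {"great", "love", "excellent", "good", "helpful", "fast"}
NEGATIVE_WORDS = {"bad", "slow", "terrible", "hate", "broken", "poor"}


def classify_sentiment(text: str) -> str:
    """Label a comment 'positive', 'negative', or 'neutral' by counting lexicon hits."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE_WORDS for word in words)
    negatives = sum(word in NEGATIVE_WORDS for word in words)
    score = positives - negatives
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Made-up customer comments.
comments = [
    "Love the new dashboard, support was fast and helpful!",
    "The export feature is broken and the app is slow.",
    "I opened the report on Tuesday.",
]
labels = [classify_sentiment(comment) for comment in comments]
print(labels)
```

A word-count approach like this misses negation and sarcasm ("not good" would count as positive), which is one reason real sentiment analysis features are considerably more sophisticated.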

NLP is one of the emerging business intelligence trends we will see developing in multiple areas over the coming years. BI software that exploits this capability with a self-service approach will gain a competitive advantage by allowing users to conduct efficient analysis without the need for any calculations. We will definitely be watching how this technology develops in 2024.

9) Predictive & Prescriptive Analytics Tools

Business analytics of tomorrow is focused on the future and tries to answer two questions: What will happen? How can we make it happen? Accordingly, predictive and prescriptive analytics are by far the most discussed business analytics trends among BI professionals, especially since big data is becoming the main focus of analytics processes leveraged by big enterprises as well as small and medium-sized businesses.

Predictive analytics is the practice of extracting information from existing data sets to forecast future probabilities. It extends data mining, which looks only at past data: predictive analytics also produces estimates of future data and therefore, by definition, always carries the possibility of error, although those errors steadily decrease as the software that manages large volumes of data becomes smarter and more efficient. Predictive analytics indicates what might happen in the future with an acceptable level of reliability, including a few alternative scenarios and risk assessments. Applied to business, predictive analytics is used to analyze current data and historical facts to better understand customers, products, and partners and to identify potential risks and opportunities for a company.

Industries harness predictive analytics in different ways. Airlines use it to decide how many tickets to sell at each price for a flight. Hotels try to predict the number of guests they can expect on any given night to adjust prices to maximize occupancy and increase revenue. Marketers determine customer responses or purchases and set up cross-sell opportunities. Bankers, for their part, use it to generate a credit score, the number produced by a predictive model that incorporates all the data relevant to a person’s creditworthiness. There are plenty of big data examples used in real life, shaping our world, be it in the buying experience or managing customers’ data.

Predictive analytics must also become accessible to everyone, and in 2024 we expect tools to move further in that direction. Self-service predictive features are becoming a selection criterion for BI vendors and companies alike, since both can profit from them and bring more value to their businesses. In practice, predictive models rely on mathematical models, in other words forecast engines, to predict future events. Users simply select past data points, and the software automatically calculates predictions based on historical and current data.

Among different predictive analytics methods, two are quite popular among data scientists: artificial neural networks (ANN) and autoregressive integrated moving averages (ARIMA).

In artificial neural networks, data is processed in a similar way as in biological neurons. Technology duplicates biology: information flows into the mathematical neuron, is processed by it, and the results flow out. This single process becomes a mathematical formula that is repeated multiple times. As in the human brain, the power of neural networks lies in their capability to connect sets of neurons together in layers and create a multidimensional network. The input to the second layer is from the output of the first layer, and the situation repeats itself with every layer. This procedure allows for capturing associations or discovering regularities within a set of patterns with a considerable volume, number of variables, or diversity of the data.
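The layered flow described above can be sketched in a few lines of Python. This is only an illustration of how information passes through a network: the weights and biases are hypothetical, untrained values, the sigmoid activation is an assumption (the article does not name one), and the training step that would actually learn the weights from data is left out.

```python
import math


def sigmoid(x: float) -> float:
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """One layer: each neuron sums its weighted inputs, adds a bias, and applies the activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Feed the output of each layer into the next one."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical, untrained parameters: 2 inputs -> 3 hidden neurons -> 1 output.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]
output = network_forward([1.0, 2.0], layers)
print(output)
```

Each `(weights, biases)` pair is one layer, so adding depth to the network is simply a matter of appending more pairs to the list.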

ARIMA is a model for time series analysis that uses past data to describe the existing series and make predictions about the future. The analysis includes inspecting the autocorrelations, that is, how strongly current values depend on past values, and in particular choosing how many steps into the past should be considered when making predictions. Each part of ARIMA handles a different aspect of model creation: the autoregressive (AR) part estimates the current value from previous ones, the integrated (I) part differences the series to make it stationary, and the moving average (MA) part makes use of the differences between predicted and real values. We can then check whether these differences are normally distributed, random, and stationary, that is, with constant variance. Any deviations on these points can bring insight into the behavior of the data series, help predict new anomalies, or uncover underlying patterns not visible to the naked eye. ARIMA techniques are complex, and drawing conclusions from the results may not be as straightforward as with more basic statistical approaches. However, once the basic principles are grasped, ARIMA provides a powerful predictive analysis tool.
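As a rough illustration of the autoregressive idea, the sketch below differences a series once and then fits a one-step autoregression to the changes, which loosely corresponds to an ARIMA(1,1,0) model. It is a deliberate simplification: the moving average part is omitted entirely, the function names are hypothetical, and the `monthly_sales` figures are synthetic. Real projects would normally use a statistics library rather than hand-written code.

```python
def difference(series):
    """Differencing step: replace values with period-to-period changes."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def fit_ar1(values):
    """Least-squares fit of values[t] = intercept + phi * values[t-1]."""
    prev, curr = values[:-1], values[1:]
    mean_prev = sum(prev) / len(prev)
    mean_curr = sum(curr) / len(curr)
    covariance = sum((p - mean_prev) * (c - mean_curr) for p, c in zip(prev, curr))
    variance = sum((p - mean_prev) ** 2 for p in prev)
    phi = covariance / variance
    intercept = mean_curr - phi * mean_prev
    return intercept, phi


def forecast_next(series):
    """Predict the next value: last value plus the predicted next change."""
    changes = difference(series)
    intercept, phi = fit_ar1(changes)
    next_change = intercept + phi * changes[-1]
    return series[-1] + next_change


# Synthetic data, built so that each change equals 2 + 0.5 * the previous change.
monthly_sales = [100.0, 108.0, 114.0, 119.0, 123.5, 127.75]
next_month = forecast_next(monthly_sales)
print(next_month)
```

Because the sample series was constructed to follow the pattern exactly, the fit recovers it perfectly; real data would leave residual errors, and those residuals are what the moving average part of a full ARIMA model works with.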

Prescriptive analytics goes a step further into the future. It examines data or content to determine what decisions should be made and which steps should be taken to achieve an intended goal. It is characterized by techniques such as graph analysis, simulation, complex event processing, neural networks, recommendation engines, heuristics, and machine learning. Prescriptive analytics tries to see what the effect of future decisions will be in order to adjust those decisions before they are actually made. This greatly improves decision-making, as future outcomes are taken into account. Prescriptive analytics can help you optimize scheduling, production, inventory, and supply chain design to deliver what your customers want in the most efficient way, and these are some of the emerging trends in business intelligence for 2024 that we will hear more about.
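A small sketch can show the difference between predicting and prescribing. Below, a handful of demand scenarios (the prediction) are used to evaluate several candidate stock levels, and the one with the highest expected profit is recommended (the prescription). All names, prices, probabilities, and scenarios are hypothetical and serve only to illustrate the simulation idea; this is not how any particular BI product implements it.

```python
def expected_profit(stock, scenarios, unit_cost, unit_price):
    """Average profit of a stock decision across weighted demand scenarios."""
    total = 0.0
    for demand, probability in scenarios:
        units_sold = min(stock, demand)  # cannot sell more than is in stock
        total += probability * (units_sold * unit_price - stock * unit_cost)
    return total


def recommend_stock(candidates, scenarios, unit_cost, unit_price):
    """Pick the candidate decision with the highest expected profit."""
    return max(
        candidates,
        key=lambda stock: expected_profit(stock, scenarios, unit_cost, unit_price),
    )


# Hypothetical forecast: (units demanded, probability of that scenario).
demand_scenarios = [(80, 0.25), (100, 0.5), (120, 0.25)]
best_stock = recommend_stock(
    [80, 100, 120], demand_scenarios, unit_cost=6.0, unit_price=10.0
)
print(best_stock)
```

With these made-up numbers, stocking for the highest possible demand is not the best choice, because unsold units cost more than the extra sales are likely to bring in.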

10) Embedded Analytics

When data analytics occurs within a user’s natural workflow, embedded analytics is the name of the game. Businesses have recognized the potential of embedding various BI components, such as dashboards or reports, into their own applications, thus improving their decision-making processes and increasing productivity. Formerly strangled by spreadsheets, companies have realized how utilizing embedded dashboards enables them to provide higher value within their own applications. In fact, according to Allied Market Research, the embedded analytics market is projected to reach $77.52 billion by 2026, with a CAGR of 13.6% from 2017 to 2023, and this is one of the business analytics topics we will hear even more about in 2024.

Whether you need to create a sales report or send multiple dashboards to clients, embedded analytics is becoming a standard in business operations. In 2024, we will see even more companies adopting it. Departments and company owners seek professional solutions to present their data without building their own software. By simply white labeling the chosen application, organizations can achieve a polished presentation and reporting they can offer consumers.

More than just embedding a dashboard or BI features in an application, embedding analytics allows for collaboration by keeping every single stakeholder involved. By allowing clients and employees to manipulate the data in a well-known environment, you facilitate the extraction of insights from every area of your business. This makes it one of the fastest-growing business intelligence trends on this list.

Business Wire recently published a report called “Global Embedded Analytics Market (2021 to 2026) - Growth, Trends, COVID-19 Impact, and Forecasts,” in which they mention that “organizations are deploying embedded analytics solutions to realize significant gains in revenue growth, marketplace expansion, and competitive advantage.” They also add that embedding analytics will grow significantly in the healthcare industry in the coming years. Considering the massive amounts of data that hospitals collect, which got even bigger with COVID-19 and telemedicine interactions, healthcare businesses “switch from paying for service volume toward service value”. By using powerful healthcare analytics software that can be embedded, hospital managers can extract valuable insights that will help them optimize processes from a clinical, operational, and financial point of view.

This is one of the trends in business analytics that can be implemented immediately since many vendors already offer this opportunity and ensure that the application works seamlessly and without much complexity.

What Are The Analytics & Business Intelligence Trends For 2024?

We’ve summed up in this article what the near future of business intelligence looks like for us. Here are the top 10 analytics and business intelligence trends we will talk about in 2024:

- Artificial Intelligence

- Data Security

- Data Discovery/Visualization

- D&A Sustainability

- Data Sharing

- Continuous Intelligence

- Data Literacy

- Natural Language Processing

- Predictive And Prescriptive Analytics Tools

- Embedded Analytics

Become Data-driven In 2024!

Being data-driven is no longer an ideal; it is an expectation in the modern business world. 2024 will be an exciting year of looking past all the hype and moving towards extracting the maximum value from state-of-the-art online business intelligence software. We hope you enjoyed this overview, and stay tuned for more business intelligence industry trends!

If you’re ready to start your business intelligence journey, or keep up with the 2024 trends, trying our software for a 14-day trial will do the trick! And it’s completely free!